If you're not monitoring your server's storage use, it can quickly get away from you. Today's guide shows you how to keep an eye on things, with an introduction to LVM thin provisioning and to monitoring disk use with Diskover, lvs, and Grafana.

Case Study

Previously, I've discussed adding SMB storage to Proxmox, which I use on my virtualization server to store large static files such as OS installation ISOs and backup images of my VMs.

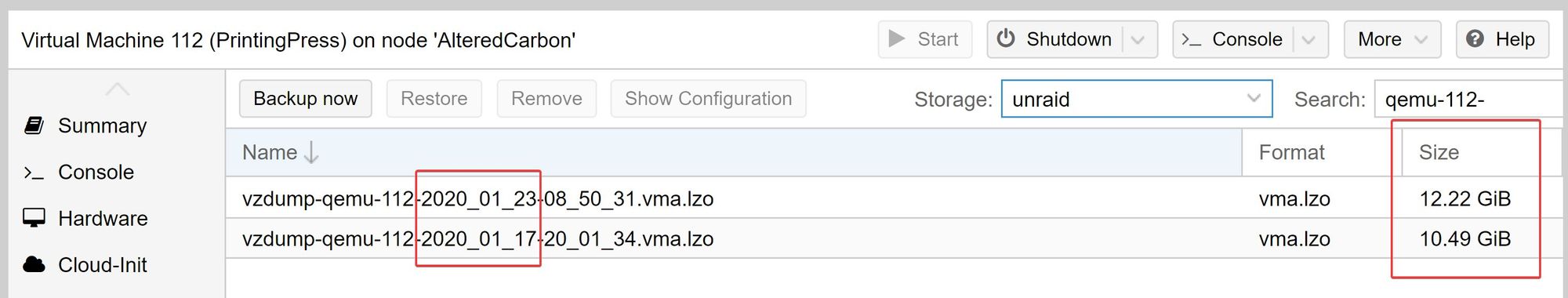

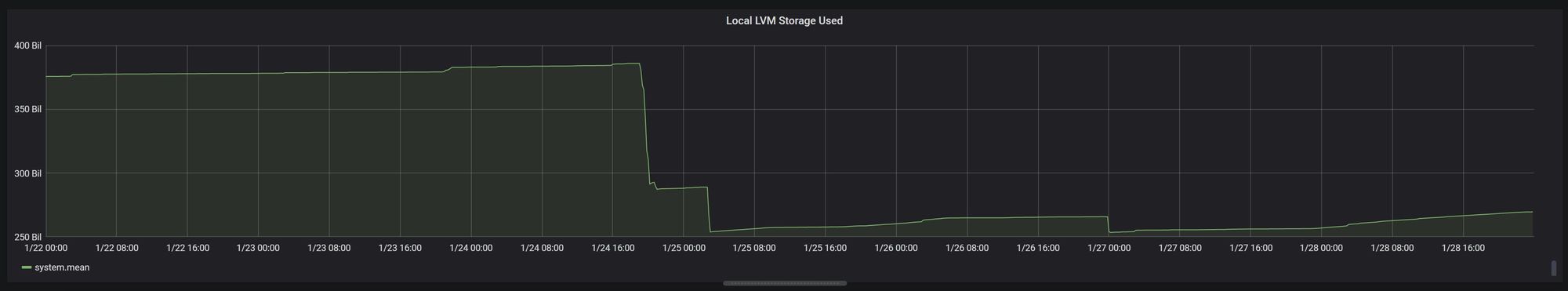

I back up my VMs at least once, if not twice a week, depending on the VM. However, over the course of several weeks, I have noticed this concerning trend in the size of my backup images:

In less than a week, my backup image had grown almost 2 GB! Seeing as I only publish a post about once per week, and each post's images total maybe a few megabytes, this was extremely concerning. Even more concerning was that I had storage growth like this across the board for my VMs (in hindsight, this turned out to be an important clue, as we'll see later).

In today's article, I will show you how I monitor data usage (and growth) on my servers and how to use this information to troubleshoot such a case.

Thin Provisioning

Background

First, let's start off with some background information about my virtualization server. The first thing you need to know is that I use LVM thin pools for VM storage on my Proxmox server.

If you're not familiar with thin provisioning, its chief advantage is that it allows you to "overcommit" the disk space given to an individual VM, in much the same way that we also overcommit CPU cores and RAM to VMs. This works because, at any given time, an individual VM is unlikely to need its full resources (i.e. 100% CPU utilization and 100% RAM usage) and typically requires only a fraction of what it has been allocated.

In virtualization we take advantage of this by "overpromising" CPUs to various VMs. For example, on a 24-core server, we may give 8 cores to one VM, 8 more cores to another, and so on until the sum assigned across all of our VMs far exceeds our 24 physical cores. Depending on the load of the VMs, it's not uncommon to see 2-4x as many vCPUs assigned as physically exist on a given host. (N.B.: For any individual VM though, you should never assign more vCPUs than physically exist on the host; that would be silly and would tank performance.)

The same idea applies to storage with thin provisioning.

Let's say you have a few VMs that you anticipate won't need to use more than 64 GB of storage individually. With multiple VMs on the same storage, that's potentially a lot of space to reserve in one giant block, especially if it may not ever be used.

Thin provisioning allows you to give each individual VM that 64 GB of disk space without having to carve out the full 64 GB in storage for it. A thin volume only takes up what is actually used, so you could promise your VMs a total of 2 TB of storage on a 1 TB drive.

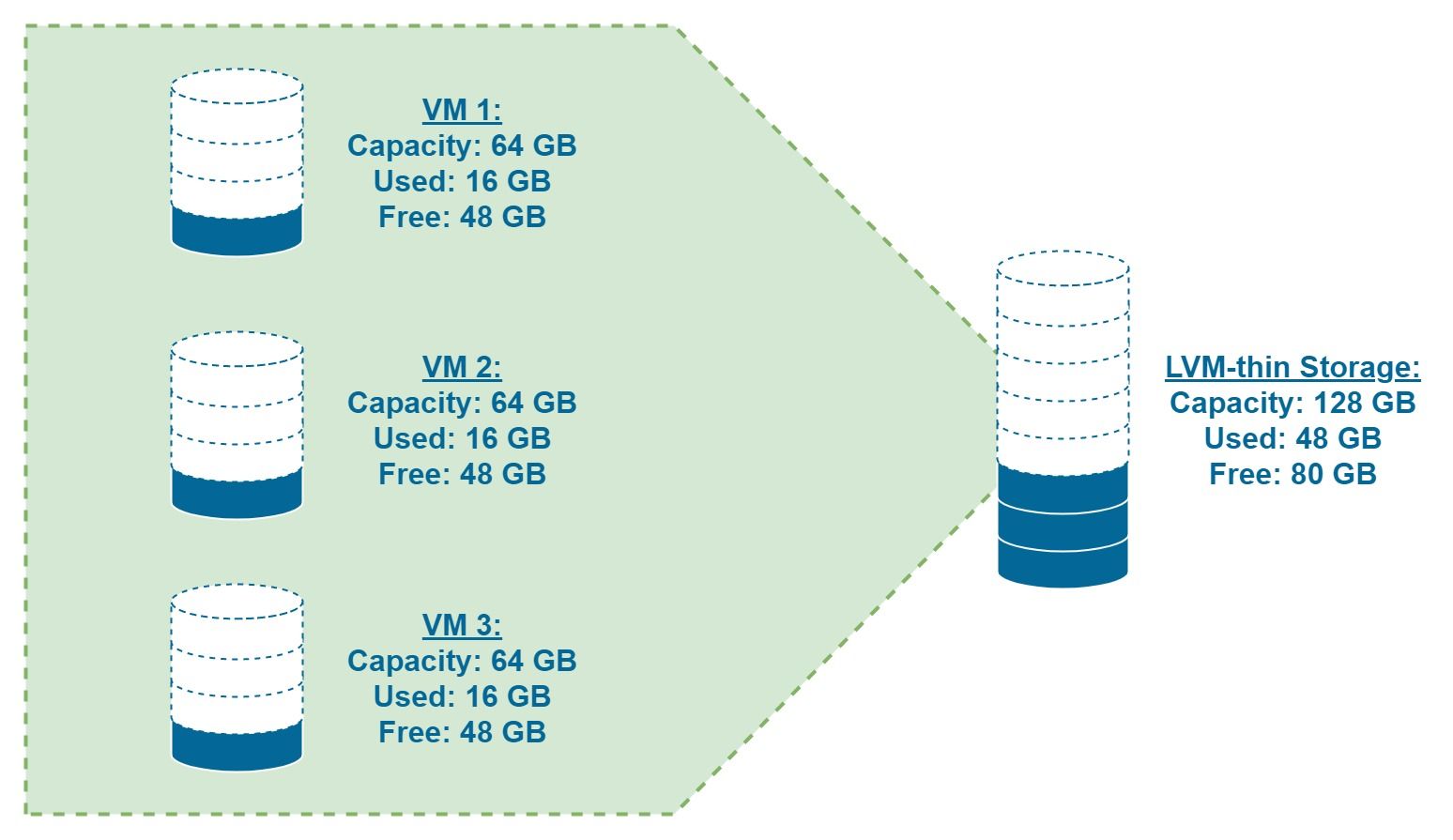

The example below illustrates this, albeit with 128GB of physical storage (titled "LVM-thin" based on Proxmox's naming convention) for the sake of clarity:

Note how each individual VM has 64 GB of disk storage extended to it, but is currently only using 16 GB. Without thin provisioning, 192 GB of storage would be required (64 GB * 3 VMs = 192 GB), but with thin provisioning only the 48 GB of storage in use is actually needed (16 GB used * 3 VMs = 48 GB).
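The arithmetic in this example can be sketched in a few lines of Python. This is only an illustration of the numbers above; the function name `thin_pool_summary` is my own invention and not part of any LVM or Proxmox tooling.

```python
def thin_pool_summary(pool_gb, promised_gb_per_vm, used_gb_per_vm):
    """Summarize a thin pool from per-VM promised and used sizes (in GB)."""
    promised = sum(promised_gb_per_vm)
    used = sum(used_gb_per_vm)
    return {
        "promised_gb": promised,                 # what the VMs think they have
        "used_gb": used,                         # what the pool actually stores
        "overcommit_ratio": promised / pool_gb,  # > 1.0 means overcommitted
        "pool_used_pct": 100 * used / pool_gb,
    }


# Three VMs, each promised 64 GB and using 16 GB, on a 128 GB pool.
summary = thin_pool_summary(128, [64, 64, 64], [16, 16, 16])
print(summary)
```

Here the pool is overcommitted by a factor of 1.5 (192 GB promised on 128 GB of storage) while only 37.5% of it is actually in use.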

The Problem with Thin Provisioning

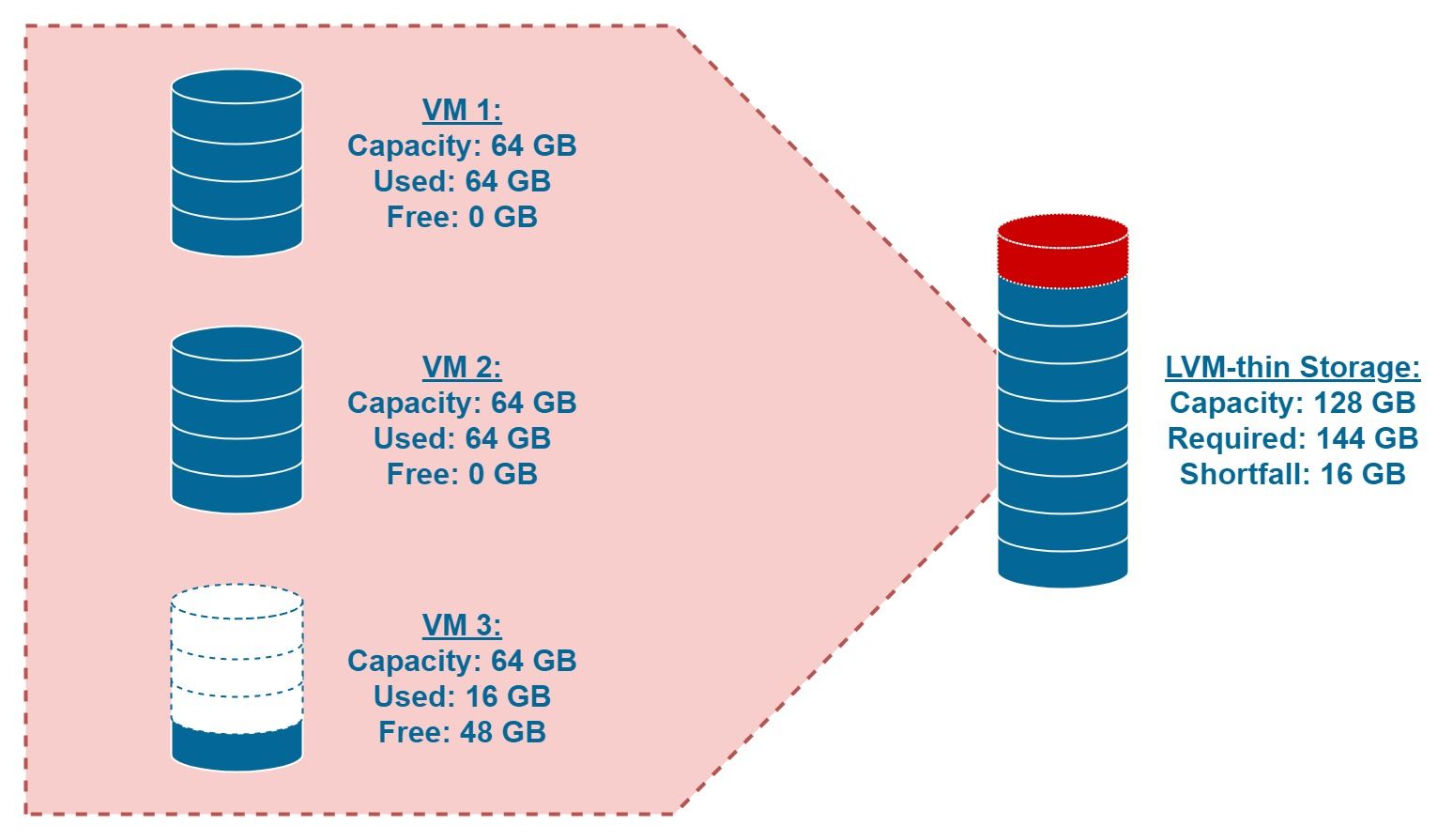

The disaster arises, though, if those VMs call your bluff and actually claim the storage that's been promised to them. With CPU virtualization, the VMs can just steal CPU cycles from each other, but with storage there's no such analog.

This is the server admin's equivalent of a run on the bank. You can't make storage out of thin air or borrow it from someplace else when you run out, hence the importance of being vigilant about where your storage is going.

Therefore, if you use thin provisioning on your virtualization servers, you should closely monitor your storage usage, as filling up an LVM thin pool can be disastrous (and, if you're not paying attention to it, extremely easy to do accidentally).

Monitoring LVM Storage Utilization

Grafana

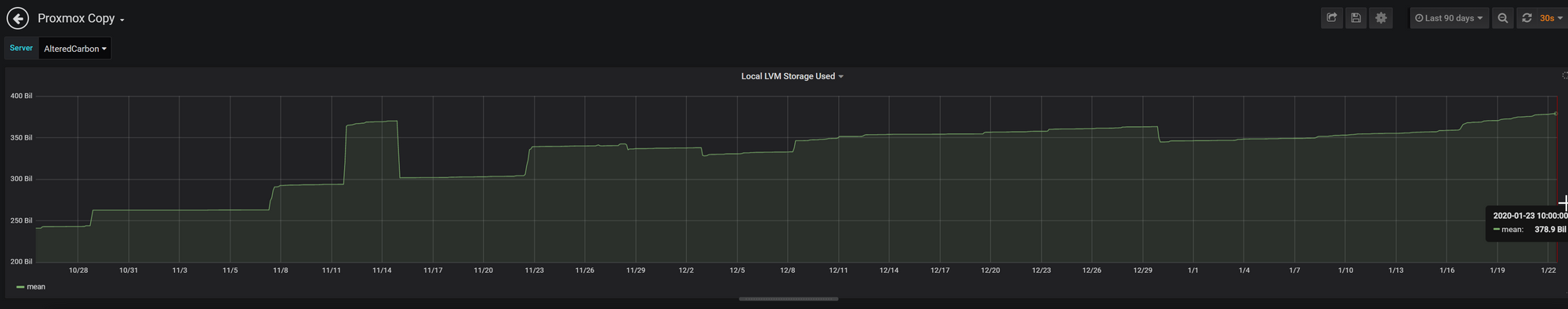

What's the easiest way to monitor LVM usage? For a quick visualization, I prefer Grafana since it's easy to deploy along with InfluxDB in an LXC container. (Future article in the works).

As you can see above, the LVM thin pool is being rapidly consumed. At a rate of over 40 GB/month, we could hit storage full in under a year. Not good.
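As a rough sanity check on that estimate, here is a minimal sketch that projects how long the pool would last. It assumes the pool size (816.7 GiB) and utilization (43.21%) that lvs reports for this pool, plus perfectly linear growth, which real workloads rarely follow. The function name is hypothetical.

```python
GIB = 2**30  # bytes per gibibyte (what lvs reports by default)
GB = 10**9   # bytes per gigabyte (what the growth rate is quoted in)


def months_until_full(pool_gib, used_pct, growth_gb_per_month):
    """Estimate months of headroom left, assuming linear growth."""
    free_gb = pool_gib * (1 - used_pct / 100) * GIB / GB
    return free_gb / growth_gb_per_month


months = months_until_full(816.7, 43.21, 40)
print(f"about {months:.1f} months until the pool is full")
```

At exactly 40 GB/month this works out to roughly a year of headroom; any growth rate above that shortens it further.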

lvs

Alternatively, if you're a Linux purist and prefer the command line (or just don't want to go through all the extra setup of installing/configuring Grafana/InfluxDB), you can simply use the following command:

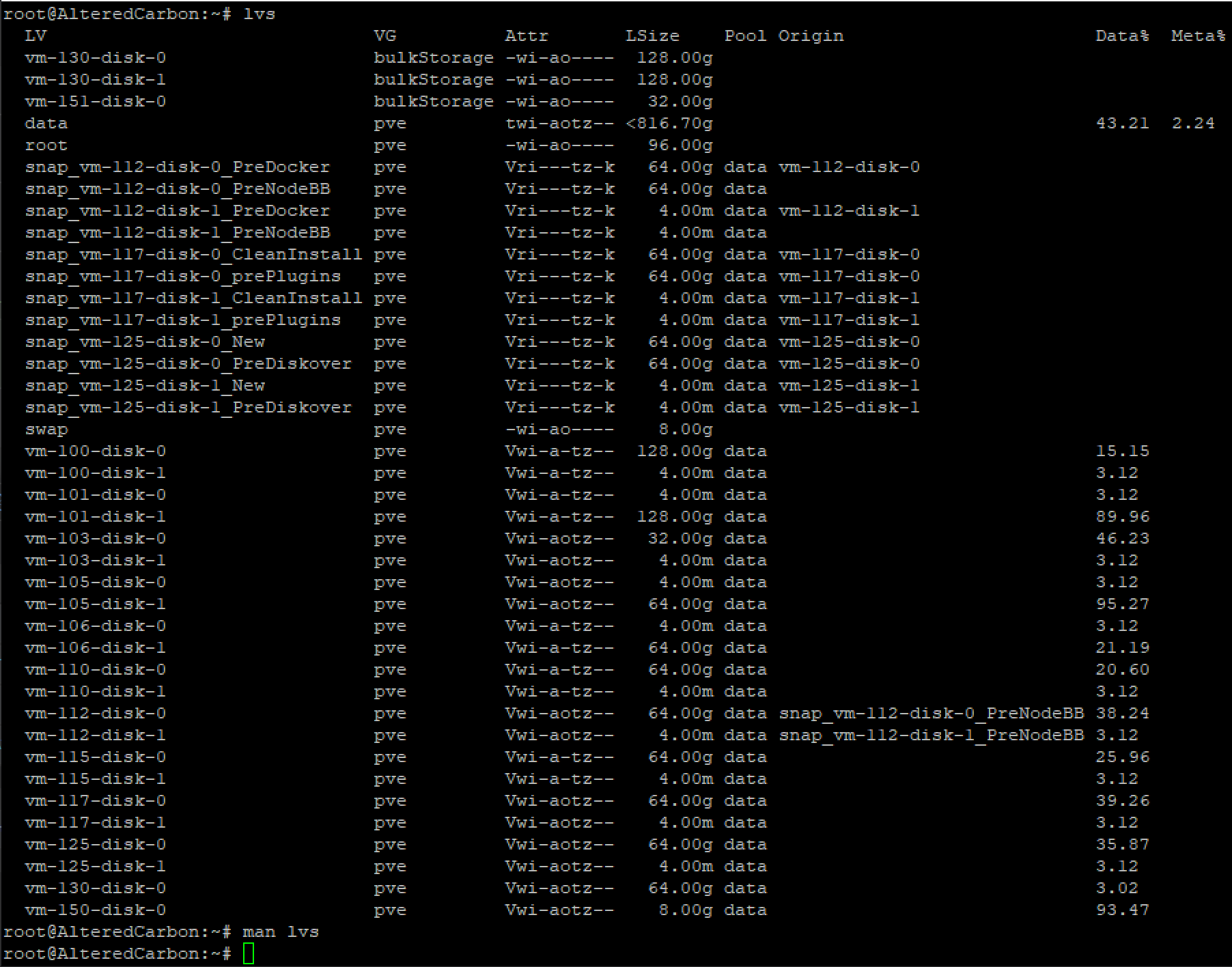

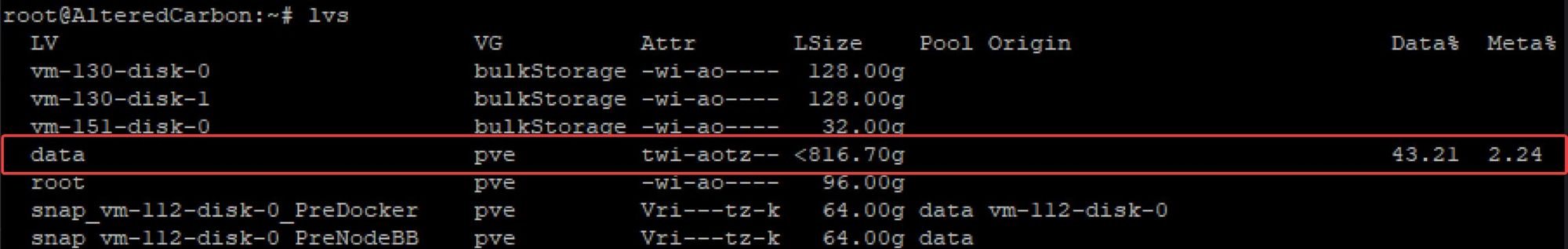

lvs

Which will give you something like this:

Looking at the row for the data logical volume (LV), we see that the storage used (Data%) is 43.21%. 43.21% of 816.7 GiB is 352.896 GiB, or 378.92 GB, the same number shown above in Grafana.
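The percentage-to-size conversion is easy to get wrong because lvs reports binary units (GiB) by default, while Grafana here shows decimal GB. A small sketch of the calculation follows; the helper name `used_space` is my own.

```python
GIB = 2**30  # bytes per gibibyte
GB = 10**9   # bytes per gigabyte


def used_space(lsize_gib, data_pct):
    """Convert an LSize (GiB) and Data% pair into used GiB and used GB."""
    used_gib = lsize_gib * data_pct / 100
    used_gb = used_gib * GIB / GB
    return used_gib, used_gb


used_gib, used_gb = used_space(816.7, 43.21)
print(f"{used_gib:.3f} GiB = {used_gb:.2f} GB")
```

Keeping the two unit constants explicit makes it obvious which side of the GiB/GB divide each number is on.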

Additionally, by paying attention to the "Data%" column, the lvs command can be used for identifying the worst offenders when it comes to storage utilization (take a look at VM 105 for example). This can not only be used to identify potentially problematic VMs for further investigation, it can also be used to determine what VMs may need their storage allocation expanded.
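If you'd rather not eyeball the table, the ranking can be scripted. The sketch below assumes a reasonably recent LVM2 that supports `--reportformat json`, and that the report nests rows under `report` and `lv` as shown. The sample data is illustrative only: the volume names and the figures for VM 105 are made up, and only the `data` and VM 112 percentages come from this article.

```python
import json
import subprocess

# lvs options used here: JSON report, sizes in GiB without a unit suffix.
LVS_CMD = [
    "lvs", "--reportformat", "json", "--units", "g", "--nosuffix",
    "-o", "lv_name,lv_size,data_percent",
]


def fetch_lvs_report():
    """Run lvs on the host (usually needs root) and return its JSON text."""
    result = subprocess.run(LVS_CMD, check=True, capture_output=True, text=True)
    return result.stdout


def rank_by_data_percent(report_text):
    """Return (name, size_gib, data_pct) tuples, fullest volume first."""
    rows = []
    for report in json.loads(report_text)["report"]:
        for lv in report.get("lv", []):
            pct = lv.get("data_percent", "")
            if pct == "":  # blank for LVs that are not thin
                continue
            rows.append((lv["lv_name"], float(lv["lv_size"]), float(pct)))
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Illustrative input only: names and the vm-105 figures are made up.
SAMPLE_REPORT = json.dumps({"report": [{"lv": [
    {"lv_name": "root", "lv_size": "96.00", "data_percent": ""},
    {"lv_name": "data", "lv_size": "816.70", "data_percent": "43.21"},
    {"lv_name": "vm-105-disk-0", "lv_size": "64.00", "data_percent": "85.00"},
    {"lv_name": "vm-112-disk-0", "lv_size": "64.00", "data_percent": "38.24"},
]}]})

ranked = rank_by_data_percent(SAMPLE_REPORT)
for name, size_gib, pct in ranked:
    print(f"{name:<16} {size_gib:>8.2f} GiB {pct:>6.2f}%")
```

On a real host you would pass `fetch_lvs_report()` instead of `SAMPLE_REPORT`. Note that the thin pool itself (`data`) shows up in the ranking alongside the VM disks, so you may want to filter it out.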

It turns out that what was causing this high volume utilization was common to all of my VMs (more on that later), but for now, I'm going to focus on the VMs that I had specifically identified with high storage growth.

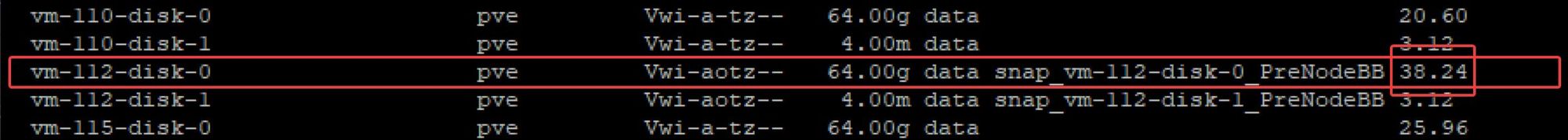

Looking at VM 112, we see that Proxmox is reporting that 38.24% of the volume's 64 GiB is in use (26.27 GB). That's suspicious because the VM itself reports only 10.7 GB in use (another clue to be discussed later).
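To put a number on that discrepancy, here is a small sketch that compares the host-side figure with what the guest reports. Both function names are hypothetical, and `guest_used_gb` only covers the single filesystem containing the given path, so treat the result as a rough indicator rather than an exact accounting.

```python
import shutil

GIB = 2**30  # bytes per gibibyte
GB = 10**9   # bytes per gigabyte


def guest_used_gb(path="/"):
    """Run inside the VM: space used on the filesystem holding `path`, in GB."""
    return shutil.disk_usage(path).used / GB


def unreclaimed_gb(lv_size_gib, data_pct, guest_gb):
    """Host-side usage (from lvs) minus guest-side usage, in GB."""
    host_gb = lv_size_gib * data_pct / 100 * GIB / GB
    return host_gb - guest_gb


# VM 112: 38.24% of 64 GiB on the host versus 10.7 GB inside the guest.
gap = unreclaimed_gb(64, 38.24, 10.7)
print(f"{gap:.1f} GB allocated in the pool but not in use by the guest")
```

For VM 112 the gap comes out to more than 15 GB, which is larger than the amount of data the guest is actually storing.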

Monitoring Data Growth

Diskover

I first wanted to identify the source of each VM's data growth. Was there a certain application/directory causing all of this storage growth?

The best tool I have found for this kind of granular investigation of storage growth is Diskover. Diskover gives great visualizations showing storage usage and storage growth with its heat maps. I used the following guide for deploying Diskover to monitor my VMs:

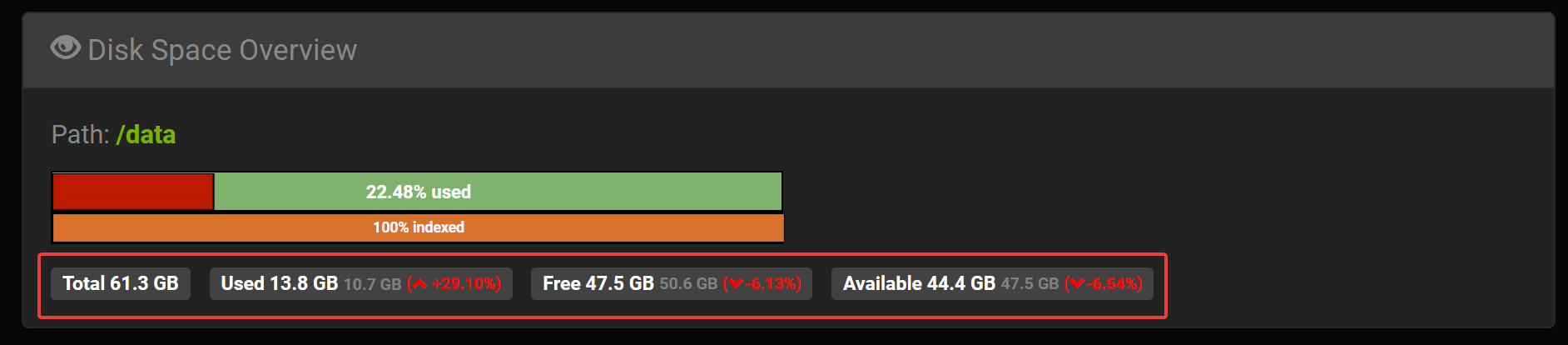

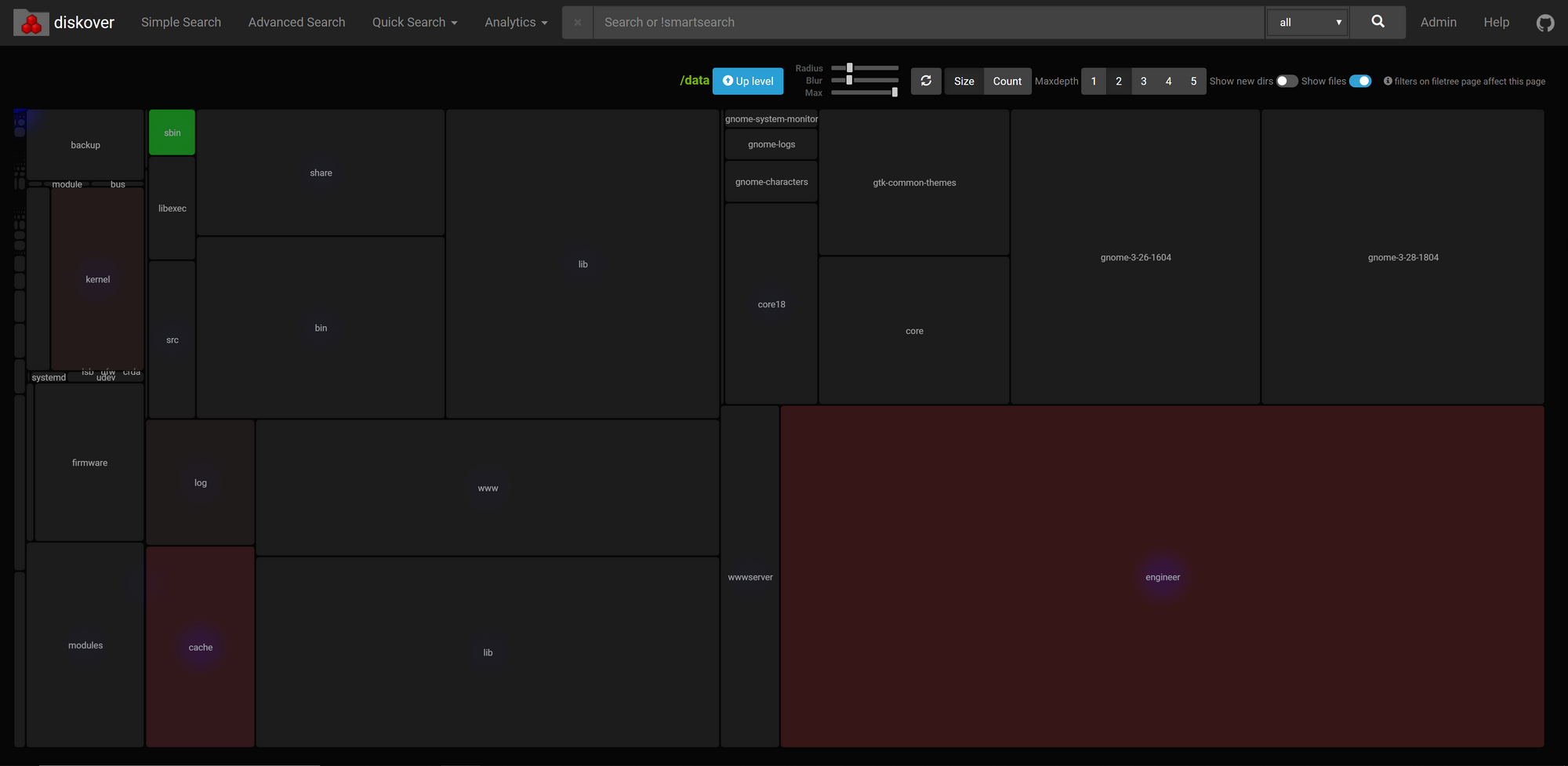

I monitored VM 112 with Diskover over a similar time period and obtained the following data after a week:

Here we see a data growth of 29.10% (or 3.1 GB) after a week. However, we have to keep in mind that Diskover stores its data in an Elasticsearch database, which itself takes up a large amount of storage. Drilling down further with Diskover:

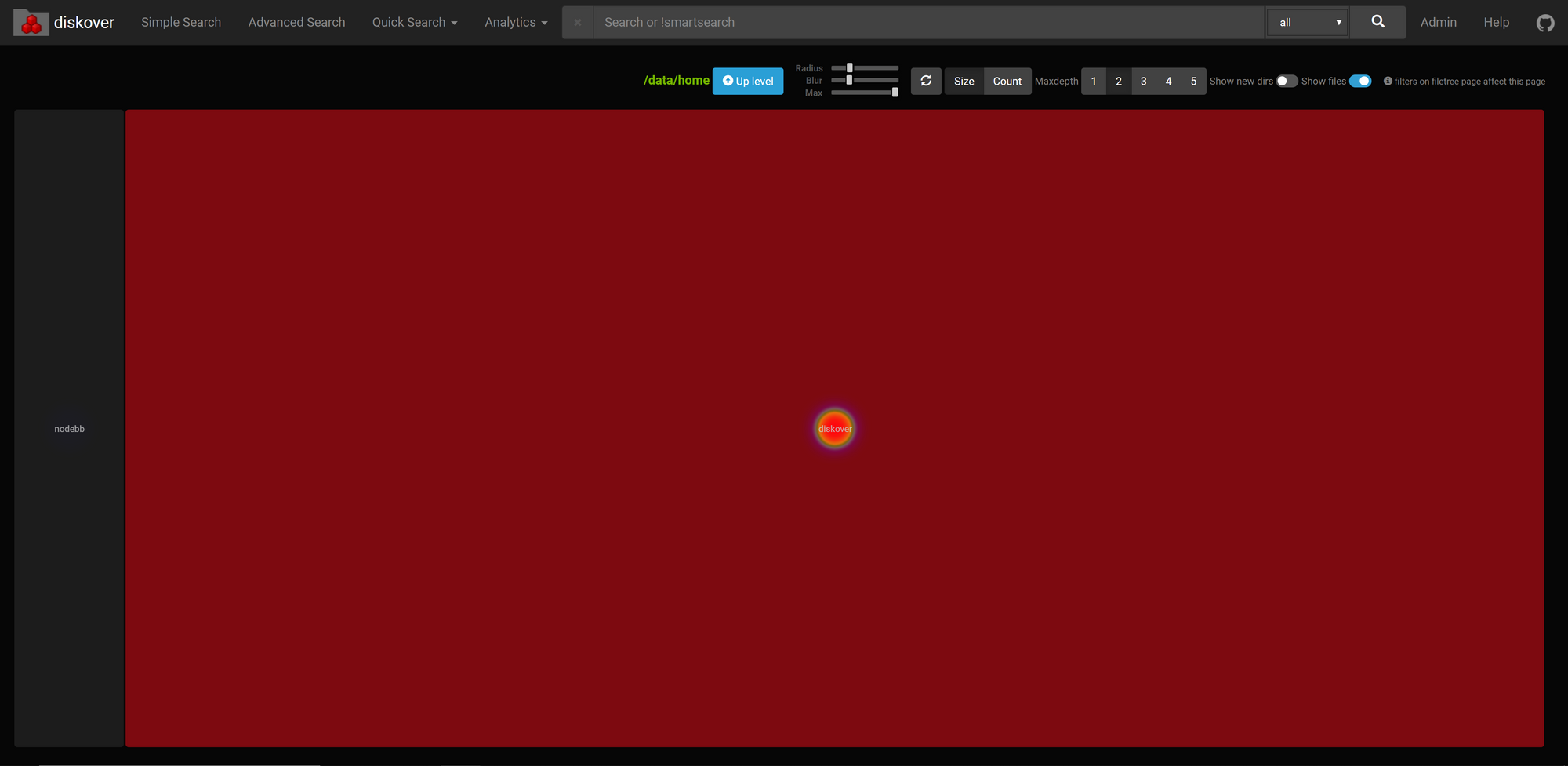

Looks like "engineer" (my home folder) is taking up a lot of space (determined by the relative size of the engineer box) and has grown significantly. Let's drill down further on that:

Oh, diskover has grown the most in this time. By almost 3.1 GB actually (shown by hovering over it in Diskover; sorry, it's not in the screenshot).

So, with an overall VM storage growth of 3.1 GB, almost all of which is attributable to the diskover monitoring itself, it appears that this VM's disk usage isn't growing at all. What the heck is going on?

The Problem and the Resolution

Let's summarize the problem and what we've found so far:

- Proxmox is telling us that our LVM thin pool is being used up: storage use is growing. We see this in Grafana and lvs.

- When we monitor disk usage inside the VM itself with Diskover, we don't see any disk usage growth.

How is this discrepancy possible? Is something lying to Proxmox about how much storage our VMs are actually using? Well, it turns out, yes, and we actually already saw this when we ran lvs:

Remember how I said that lvs showed that our VM was using 38.24% of its allocated 64 GiB (i.e. 26.27 GB) and yet inside my VM itself it was only showing 10.7 GB used? That paradox was the big clue.

So what is going on here?

Well, when you use thin provisioning, only the VM's filesystem knows what storage blocks actually are and are not in use. In other words, only the VM can tell Proxmox what storage it's truly using.

How do we resolve this?

Trim/Discard

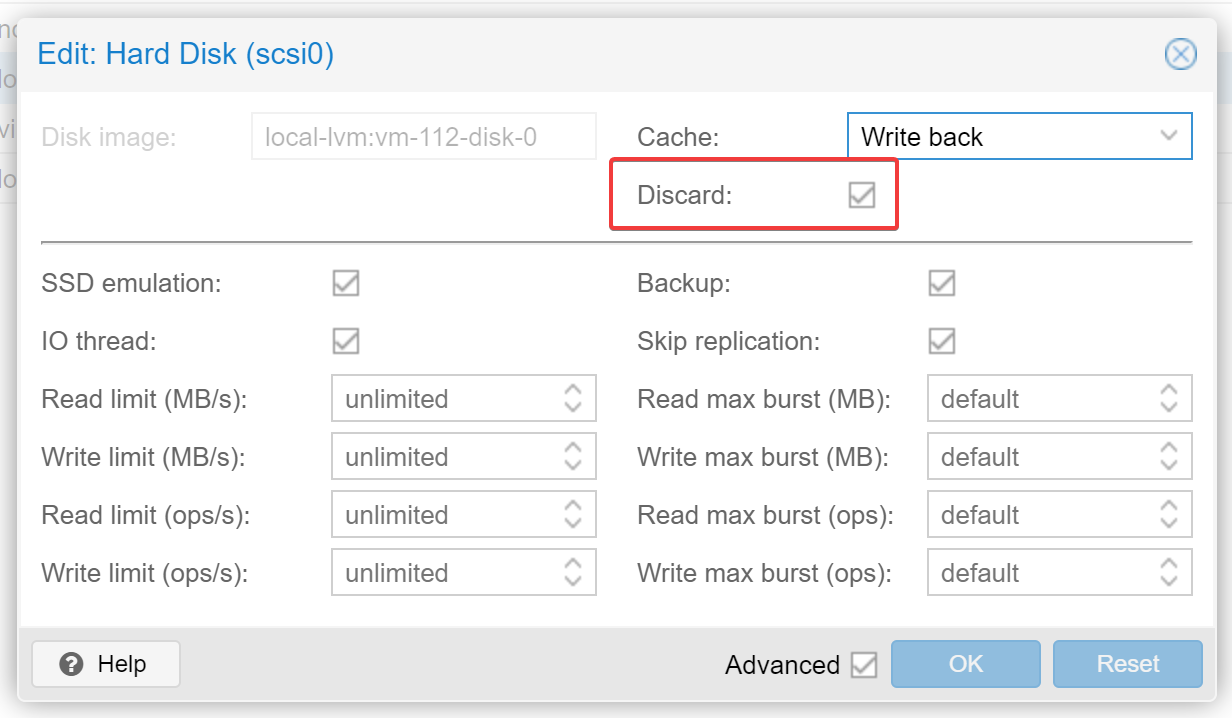

It turns out there's a setting that needs to be enabled when adding storage to the VM. That setting is the "Discard" option, which should be checked:

Proxmox has this to say about the Discard option in their documentation:

Trim/Discard

If your storage supports thin provisioning (see the storage chapter in the Proxmox VE guide), you can activate the Discard option on a drive. With Discard set and a TRIM-enabled guest OS, when the VM’s filesystem marks blocks as unused after deleting files, the controller will relay this information to the storage, which will then shrink the disk image accordingly. For the guest to be able to issue TRIM commands, you must enable the Discard option on the drive. Some guest operating systems may also require the SSD Emulation flag to be set. Note that Discard on VirtIO Block drives is only supported on guests using Linux Kernel 5.0 or higher.

Source: Proxmox VE Administration Guide

I had managed to miss this setting and had not applied it across all of my VMs. Setting the Discard option (and running fstrim within the VMs) resolved the problem:

Pretty significant eh?

So, make sure "Discard" is checked if you're using thin provisioning, and ALWAYS monitor your storage.
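For reference, the two steps of the fix (enabling Discard on the host, then trimming inside the guest) might look like this when scripted. This is a sketch under assumptions: the bus slot `scsi0`, the storage ID `local-lvm`, and the volume name `vm-112-disk-0` are typical Proxmox defaults rather than values taken from my setup, so check your VM's hardware tab first. As far as I know, `qm set` replaces the drive's whole option string, so any other options already on the disk need to be restated. The helper names are hypothetical.

```python
import subprocess


def discard_command(vmid, bus_slot, volume):
    """Host side: build the qm command that re-attaches a disk with discard=on."""
    return ["qm", "set", str(vmid), f"--{bus_slot}", f"{volume},discard=on"]


def fstrim_command():
    """Guest side: trim all mounted filesystems that support it."""
    return ["fstrim", "--all", "--verbose"]


def run(cmd, dry_run=True):
    """Print the command by default; execute it only when dry_run is False."""
    if dry_run:
        print(" ".join(cmd))
        return None
    return subprocess.run(cmd, check=True)


# Assumed slot and volume names; adjust to match your VM before running.
run(discard_command(112, "scsi0", "local-lvm:vm-112-disk-0"))  # on the Proxmox host
run(fstrim_command())                                          # inside the VM
```

The dry-run default is deliberate: it lets you confirm the exact commands before anything touches a VM's configuration.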

You have now learned about the tools available to you for investigating storage growth. I hope you found this article interesting. Let me know what you think in the comments below! As always, if you have any questions, please let me know!